Unlock instant content discoverability with Moments Lab and qibb

Finding the right clip shouldn’t feel like finding a needle in a haystack.

At IBC2025, qibb and Moments Lab teamed up to show how AI-powered metadata enrichment and seamless MAM workflow integration can make every media asset instantly discoverable, so it can work harder for broadcasters, streaming platforms, and sports organisations.

Moments Lab’s multimodal video understanding AI analyses your content to deliver rich metadata, including scene descriptions, people and object recognition, and transcripts. qibb’s low-code media workflow orchestration platform then automatically maps this metadata back into any MAM, from Mimir and GV AMPP to Iconik, Avid, Dalet, and EVS. The metadata is fully aligned with your existing metadata model, with no manual re-entry or workflow disruption.

The result? A unified, automated process that turns your archive into a searchable, monetisable resource and a foundation for more advanced, AI-driven workflows.

How the workflow works

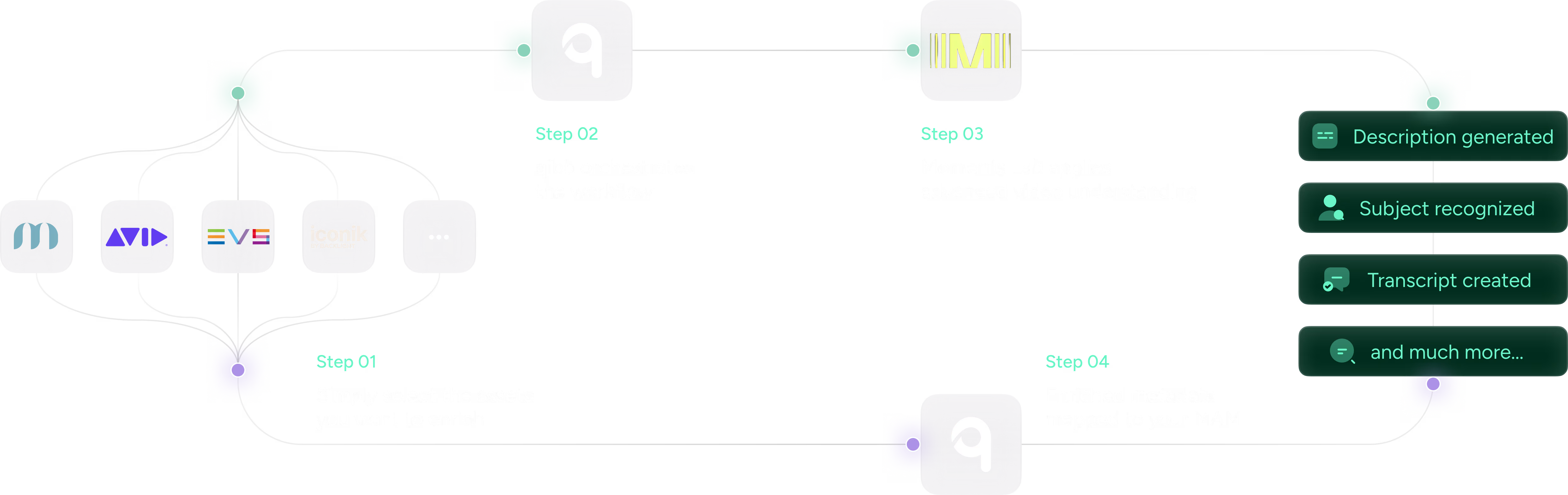

It starts in the place your teams already know best: your MAM. Whether you’re working in Mimir, GV AMPP, Iconik, Avid, Dalet, or EVS, you simply select the assets you want to enrich. That could mean an entire back catalogue, a daily batch of new footage, or a set of clips for a specific project.

From there, qibb orchestrates the workflow, sending your chosen assets to Moments Lab for AI analysis. Moments Lab applies its multimodal video understanding to every frame, generating scene descriptions, recognising people and objects, and creating accurate transcripts. This step transforms raw footage into richly described, searchable media.

qibb then brings it all together, automatically mapping the enriched metadata back into your MAM’s existing model so that it follows your structure, taxonomies, and naming conventions. It’s a fully automated loop that runs in the background, so your teams can keep working without interruption.
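To make the mapping step more concrete, here is a minimal Python sketch of what "mapping enriched metadata into an existing model" can look like. It is an illustration only, not qibb’s implementation or Moments Lab’s output format: the `Enrichment` record, the `FIELD_MAP` target field names (such as `Keywords.Objects`), and the `TAXONOMY` vocabulary are all hypothetical assumptions. It shows AI labels being renamed into MAM fields and normalised against a controlled vocabulary.

```python
from dataclasses import dataclass, field


# Hypothetical shape of AI enrichment output for one asset (illustrative only,
# not Moments Lab's actual format).
@dataclass
class Enrichment:
    asset_id: str
    scene_descriptions: list[str] = field(default_factory=list)
    people: list[str] = field(default_factory=list)
    objects: list[str] = field(default_factory=list)
    transcript: str = ""


# Hypothetical mapping from enrichment fields to an existing MAM's field names.
FIELD_MAP = {
    "scene_descriptions": "Description",
    "people": "Keywords.People",
    "objects": "Keywords.Objects",
    "transcript": "Transcript",
}

# Hypothetical controlled vocabulary: free-form AI labels -> MAM taxonomy terms.
TAXONOMY = {
    "football": "Sport/Football",
    "soccer ball": "Sport/Football",
    "stadium": "Venue/Stadium",
}


def to_taxonomy(labels: list[str]) -> list[str]:
    """Translate labels into taxonomy terms, dropping labels the taxonomy
    does not know and duplicates, while preserving first-seen order."""
    terms: list[str] = []
    for label in labels:
        term = TAXONOMY.get(label.strip().lower())
        if term is not None and term not in terms:
            terms.append(term)
    return terms


def map_to_mam(e: Enrichment) -> dict[str, object]:
    """Build a metadata update keyed by the (hypothetical) MAM field names."""
    return {
        "asset_id": e.asset_id,
        FIELD_MAP["scene_descriptions"]: " ".join(e.scene_descriptions),
        FIELD_MAP["people"]: sorted(set(e.people)),  # de-duplicated names
        FIELD_MAP["objects"]: to_taxonomy(e.objects),
        FIELD_MAP["transcript"]: e.transcript,
    }


if __name__ == "__main__":
    sample = Enrichment(
        asset_id="clip-001",
        scene_descriptions=["Wide shot of a stadium.", "Striker scores."],
        people=["Player A", "Player A", "Coach B"],
        objects=["Stadium", "soccer ball", "football", "flag"],
        transcript="And it's in the back of the net!",
    )
    print(map_to_mam(sample))
```

Dropping labels that are not in the taxonomy keeps the MAM’s controlled vocabulary clean. A real setup might instead route unknown labels to a free-text keyword field for review.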

Why it matters

For most media teams, searching an archive can be a frustrating, time-consuming process. Even when the perfect shot exists, it can be buried under inconsistent or incomplete metadata. Combining Moments Lab’s AI capabilities with qibb’s integration and automation removes that challenge.

Suddenly, you can locate the exact scene you need in minutes, not hours. You can repurpose and monetise content that was previously hard to find. And because the metadata is consistent and comprehensive, it becomes the foundation for other high-value workflows: automated localisation, personalised content delivery, and even fully automated production chains. For broadcasters, streamers, and sports leagues, that means turning years of underused archive content into a searchable, AI-ready library that supports everything from fast-turnaround promos to new discovery and recommendation experiences.

Best of all, you can do this without replacing your existing systems. The qibb + Moments Lab integration works with whatever MAM you already have in place, so you’re adding capability, not complexity.

FAQ

How do Moments Lab and qibb work together to improve content discoverability?

Moments Lab’s multimodal AI analyses video to generate rich metadata such as scene descriptions, people and object recognition, and transcripts. qibb then orchestrates the workflow, sending assets to Moments Lab and mapping the enriched metadata back into your existing MAM model so every clip becomes easier to find and reuse.

Do we need to replace our existing MAM to use Moments Lab and qibb?

No. qibb is a low-code media workflow orchestration platform that connects Moments Lab to the MAM systems you already use, including Mimir, GV AMPP, Iconik, Avid, Dalet, and EVS. You keep your current tools while adding AI-powered metadata enrichment as an integrated workflow.

What new workflows become possible once our archive is enriched with AI metadata?

Richer metadata enables faster clip search for editors, automated localisation and subtitling, personalised content recommendations, and fully or partially automated production chains. Because qibb orchestrates these workflows end to end, you can introduce new AI-driven use cases without disrupting your existing operations.


